The F-test statistic is the ratio of two variances, and if its value equals 1, the two variances are equal and we accept the null hypothesis. But when we want to check the equality of several means, we also perform an F-test. Here the F-test statistic is the ratio of the between-group mean square to the within-group mean square.

My question is: why do these two variances have to be equal if the samples come from the same population? And how does the F-test check the equality of several means?


The $F$ statistic is the ratio of the between-group variance to the within-group variance. If this test statistic is large, this suggests that the variation between groups is much higher than the variation within a group; therefore, it is likely that not all of the group means are equal to each other.

Conversely, if all of the group means are equal to each other, you would find that the between-group variance is not appreciably larger than the within-group variance. (This reasoning assumes the data are normally distributed with a common variance in every group.) The test statistic would then be small, it would fail to exceed the critical value, and the test would provide no evidence that the group means differ. That is not the same as affirming that the group means are the same: failure to reject the null hypothesis does not mean the null hypothesis is true. It only means that there is insufficient evidence to reject it.
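A minimal Python sketch (standard library only, with made-up numbers) may make this concrete. The helper `one_way_f` is a hypothetical name for a balanced one-way ANOVA $F$ statistic. Both datasets have exactly the same within-group spread; only the distance between the group means changes, and $F$ grows with it.

```python
from statistics import mean, variance

def one_way_f(groups):
    """F = between-group mean square / within-group mean square (balanced design)."""
    g, n = len(groups), len(groups[0])
    group_means = [mean(y) for y in groups]
    grand_mean = mean(group_means)  # equals the overall mean when every group has n values
    ms_between = n / (g - 1) * sum((m - grand_mean) ** 2 for m in group_means)
    ms_within = mean([variance(y) for y in groups])  # average within-group sample variance
    return ms_between / ms_within

# Same within-group deviations (-1, 0, +1) in every group; only the group means differ.
close = [[4, 5, 6], [4.5, 5.5, 6.5], [5, 6, 7]]   # means 5, 5.5, 6
far = [[1, 2, 3], [4.5, 5.5, 6.5], [8, 9, 10]]    # means 2, 5.5, 9

f_close = one_way_f(close)
f_far = one_way_f(far)
print(f"F (means close together): {f_close:.2f}")  # 0.75
print(f"F (means far apart):      {f_far:.2f}")    # 36.75
```

The within-group variance is 1 in every group in both cases, so the whole change in $F$ comes from the numerator.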

We NEVER say "accept the null hypothesis." This is because the distribution of the test statistic, and hence the resulting $p$-value, is derived under the assumption that the null hypothesis is true; the $p$-value is a conditional probability. You cannot "accept $H_0$" if the decision rule you used to determine the result of your test is based on assuming $H_0$ is true in the first place.
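One way to see that a $p$-value is computed *assuming* $H_0$ is to estimate it by simulation: generate many datasets in which $H_0$ holds (all groups normal with one common mean and variance) and count how often their $F$ is at least as large as the observed one. The Python sketch below is an illustration only, with hypothetical helper names; standard software uses the $F$ distribution directly instead. It relies on $F$ being unchanged when all the data are shifted or rescaled, so standard normal draws represent every null case.

```python
import random

def mean(xs):
    return sum(xs) / len(xs)

def sample_var(xs):
    m = mean(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

def one_way_f(groups):
    """Balanced one-way ANOVA F statistic."""
    g, n = len(groups), len(groups[0])
    means = [mean(y) for y in groups]
    grand = mean(means)
    ms_between = n / (g - 1) * sum((m - grand) ** 2 for m in means)
    ms_within = mean([sample_var(y) for y in groups])
    return ms_between / ms_within

def monte_carlo_p_value(groups, n_sims=2000, seed=1):
    """Estimate P(F >= F_obs) when H0 is true (common normal mean and variance)."""
    rng = random.Random(seed)
    g, n = len(groups), len(groups[0])
    f_obs = one_way_f(groups)
    exceed = 0
    for _ in range(n_sims):
        # F is location- and scale-invariant, so N(0, 1) data stand in for any null case.
        null_groups = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(g)]
        if one_way_f(null_groups) >= f_obs:
            exceed += 1
    # The +1 counts the observed data as one draw from the null distribution.
    return (exceed + 1) / (n_sims + 1)

close = [[4, 5, 6], [4.5, 5.5, 6.5], [5, 6, 7]]   # F = 0.75
far = [[1, 2, 3], [4.5, 5.5, 6.5], [8, 9, 10]]    # F = 36.75

p_close = monte_carlo_p_value(close)
p_far = monte_carlo_p_value(far)
print(f"p (means close): {p_close:.3f}")
print(f"p (means far):   {p_far:.4f}")
```

With $g = 3$ the numerator has 2 degrees of freedom, and then the exact tail probability has the closed form $P(F > x) = (1 + 2x/d_2)^{-d_2/2}$, here with $d_2 = 6$. That gives $0.512$ for the first dataset and about $0.0004$ for the second, which the simulated values should approximate.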

See the F-test Wikipedia article for more information.

Note: The answer by @Heropup appeared while I was typing this. I suggest you read it first. My answer is similar, but with more technical detail.

I suppose you are considering the standard ANOVA model $$Y_{ij} = \mu + \alpha_i + e_{ij},$$ where $i = 1, \dots, g$ indexes independent groups and $j = 1, \dots, n$ indexes replications within each group. Also, $e_{ij} \stackrel{IID}{\sim} Norm(0, \sigma).$
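A small Python sketch of drawing one balanced dataset from this model may help fix the notation. The function name `simulate_anova` and the parameter values are hypothetical; `random.gauss(mu, sigma)` takes the standard deviation, matching the $Norm(0, \sigma)$ parameterization used here.

```python
import random

def simulate_anova(mu, alphas, n, sigma, rng):
    """Return g lists; list i holds Y_i1..Y_in = mu + alpha_i + e_ij with e_ij ~ Norm(0, sigma)."""
    return [[mu + a + rng.gauss(0.0, sigma) for _ in range(n)] for a in alphas]

rng = random.Random(42)
alphas = [-1.0, 0.0, 1.0]
data = simulate_anova(mu=10.0, alphas=alphas, n=20000, sigma=1.0, rng=rng)

# With large n each group mean should be close to mu + alpha_i (9, 10, 11 here).
group_means = [sum(y) / len(y) for y in data]
print([round(m, 2) for m in group_means])
```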

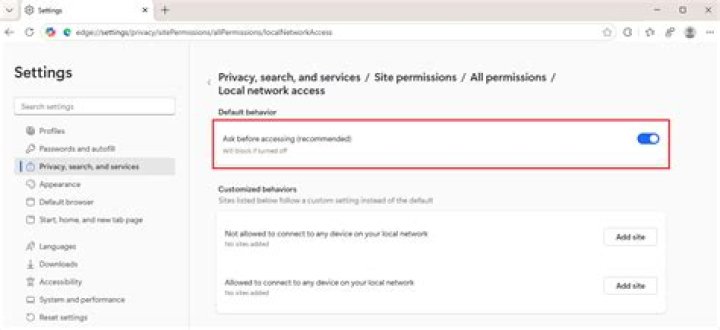

Then the $F$-test that all groups have equal population means uses the ratio of $MS(Factor) = \frac{n}{g-1} \sum_{i=1}^g(\bar Y_{i \cdot} - \bar Y_{\cdot \cdot})^2$ and $MS(Error) = \frac 1 g\sum_{i=1}^g s_i^2.$ Here $\bar Y_{i\cdot}$ are the $g$ group means, $\bar Y_{\cdot \cdot}$ is the grand mean of all $gn$ observations, and $s_i^2$ are the $g$ group sample variances (each with divisor $n - 1$).
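The two mean squares can be computed directly from these formulas. Below is a minimal Python sketch on a made-up balanced dataset with $g = 3$ and $n = 4$; the variable names are chosen to mirror $\bar Y_{i\cdot}$, $\bar Y_{\cdot\cdot}$ and $s_i^2$.

```python
from statistics import mean, variance

# Hypothetical balanced data: g = 3 groups, n = 4 observations each.
Y = [[1, 2, 3, 4],
     [2, 4, 6, 8],
     [5, 6, 7, 8]]
g, n = len(Y), len(Y[0])

Ybar_i = [mean(row) for row in Y]           # group means: 2.5, 5, 6.5
Ybar = mean([y for row in Y for y in row])  # grand mean of all g*n observations: 14/3
s2_i = [variance(row) for row in Y]         # group sample variances (divisor n - 1)

ms_factor = n / (g - 1) * sum((m - Ybar) ** 2 for m in Ybar_i)
ms_error = sum(s2_i) / g
F = ms_factor / ms_error
print(f"MS(Factor) = {ms_factor:.4f}, MS(Error) = {ms_error:.4f}, F = {F:.4f}")
# MS(Factor) = 16.3333, MS(Error) = 3.3333, F = 4.9000
```

Because the design is balanced, the grand mean of all observations equals the average of the three group means.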

Each of the $g$ sample variances $s_i^2$ is an unbiased estimate of $\sigma^2$ (whether or not $H_0$ is true), so $E[MS(Error)] = \sigma^2.$
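A quick Monte Carlo check of this claim, as a Python sketch under assumed values $g = 3$, $n = 5$, $\sigma = 2$ (so $\sigma^2 = 4$). The group effects are deliberately set far apart, because $MS(Error)$ uses only within-group deviations and should average to $\sigma^2$ whether or not $H_0$ holds. The function name and parameters are illustrative.

```python
import random

def sample_var(xs):
    m = sum(xs) / len(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)  # unbiased: divisor n - 1

def average_ms_error(alphas, n, sigma, reps, seed=0):
    """Monte Carlo average of MS(Error) = mean of the g group sample variances."""
    rng = random.Random(seed)
    g = len(alphas)
    total = 0.0
    for _ in range(reps):
        groups = [[a + rng.gauss(0.0, sigma) for _ in range(n)] for a in alphas]
        total += sum(sample_var(y) for y in groups) / g
    return total / reps

# H0 is clearly false here (effects 0, 5, 10), yet MS(Error) still targets sigma^2.
avg_mse = average_ms_error(alphas=[0.0, 5.0, 10.0], n=5, sigma=2.0, reps=4000)
print(f"average MS(Error) = {avg_mse:.3f}  (sigma^2 = 4)")
```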

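If $H_0$ is true, then all $\alpha_i = 0$ and the $g$ group means $\bar Y_{i\cdot}$ are independent, each distributed as $Norm(\mu, \sigma/\sqrt{n}).$ In a balanced design $\bar Y_{\cdot\cdot}$ is also the average of the $\bar Y_{i\cdot}$, so their sample variance $\frac{1}{g-1} \sum_{i=1}^g(\bar Y_{i \cdot} - \bar Y_{\cdot \cdot})^2$ is an unbiased estimate of $\sigma^2/n$, and multiplying by $n$ gives $E[MS(Factor)] = \sigma^2.$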
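A simulation can check the $H_0$ case for the numerator: with every $\alpha_i = 0$, the average of $MS(Factor)$ over many simulated datasets should be close to $\sigma^2$. This is a Python sketch assuming $g = 3$, $n = 5$, $\sigma = 2$; the function names are illustrative.

```python
import random

def ms_factor(groups):
    """n/(g-1) times the sum of squared deviations of the group means from the grand mean."""
    g, n = len(groups), len(groups[0])
    means = [sum(y) / n for y in groups]
    grand = sum(means) / g
    return n / (g - 1) * sum((m - grand) ** 2 for m in means)

def average_ms_factor(alphas, n, sigma, reps, seed=0):
    """Monte Carlo average of MS(Factor) for data from Y_ij = alpha_i + e_ij."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(reps):
        groups = [[a + rng.gauss(0.0, sigma) for _ in range(n)] for a in alphas]
        total += ms_factor(groups)
    return total / reps

# Under H0 every alpha_i = 0.
avg_msf_h0 = average_ms_factor(alphas=[0.0, 0.0, 0.0], n=5, sigma=2.0, reps=5000)
print(f"average MS(Factor) under H0 = {avg_msf_h0:.3f}  (sigma^2 = 4)")
```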

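So under $H_0$, $F = MS(Factor)/MS(Error)$ is the ratio of two unbiased estimates of $\sigma^2$, and values near 1 are typical. If $H_0$ is not true, then $E[MS(Factor)] = \sigma^2 + \frac{n}{g-1}\sum_{i=1}^g(\alpha_i - \bar\alpha)^2 > \sigma^2$, where $\bar\alpha$ is the average of the $\alpha_i$ (zero under the usual constraint $\sum_i \alpha_i = 0$). Large values of $F$ are therefore evidence against $H_0$.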
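Here is a Monte Carlo check of the expected value of $MS(Factor)$ when $H_0$ is false, written as a Python sketch. It assumes the balanced model with $\alpha = (-1, 0, 1)$, $n = 5$, $\sigma = 2$, and compares the simulation with $\sigma^2 + \frac{n}{g-1}\sum_i(\alpha_i - \bar\alpha)^2$, which here equals $4 + 2.5 \cdot 2 = 9$. Function names are illustrative.

```python
import random

def ms_factor(groups):
    g, n = len(groups), len(groups[0])
    means = [sum(y) / n for y in groups]
    grand = sum(means) / g
    return n / (g - 1) * sum((m - grand) ** 2 for m in means)

def average_ms_factor(alphas, n, sigma, reps, seed=0):
    rng = random.Random(seed)
    total = 0.0
    for _ in range(reps):
        groups = [[a + rng.gauss(0.0, sigma) for _ in range(n)] for a in alphas]
        total += ms_factor(groups)
    return total / reps

def expected_ms_factor(alphas, n, sigma):
    """sigma^2 + n/(g-1) * sum of (alpha_i - alpha_bar)^2 for the balanced model."""
    g = len(alphas)
    a_bar = sum(alphas) / g
    return sigma ** 2 + n / (g - 1) * sum((a - a_bar) ** 2 for a in alphas)

alphas = [-1.0, 0.0, 1.0]  # H0 false
theory = expected_ms_factor(alphas, n=5, sigma=2.0)  # 4 + 2.5 * 2 = 9
simulated = average_ms_factor(alphas, n=5, sigma=2.0, reps=5000)
print(f"theory {theory:.2f}, simulation {simulated:.2f}")
```

The excess over $\sigma^2$ is what pushes $F$ above 1 on average when the group means differ.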

Notice that $MS(Factor)$ is computed using only the sample means and $MS(Error)$ using only the sample variances. For normal data, each group's sample mean and sample variance are stochastically independent, and the groups are independent of one another, so the numerator and denominator of $F$ are independent.
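A simulation cannot prove independence, but it can show behaviour consistent with it: across many datasets drawn under $H_0$, the sample correlation between $MS(Factor)$ and $MS(Error)$ should be near zero. (Zero correlation alone does not imply independence.) This is a Python sketch assuming $g = 3$, $n = 5$ and standard normal errors; the helper names are illustrative.

```python
import random

def mean(xs):
    return sum(xs) / len(xs)

def sample_var(xs):
    m = mean(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

def mean_squares(groups):
    g, n = len(groups), len(groups[0])
    means = [mean(y) for y in groups]
    grand = mean(means)
    msf = n / (g - 1) * sum((m - grand) ** 2 for m in means)  # uses only the group means
    mse = mean([sample_var(y) for y in groups])               # uses only the group variances
    return msf, mse

def pearson_r(xs, ys):
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

rng = random.Random(7)
msf_draws, mse_draws = [], []
for _ in range(5000):
    groups = [[rng.gauss(0.0, 1.0) for _ in range(5)] for _ in range(3)]
    msf, mse = mean_squares(groups)
    msf_draws.append(msf)
    mse_draws.append(mse)

r = pearson_r(msf_draws, mse_draws)
print(f"correlation between MS(Factor) and MS(Error): {r:.3f}")
```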

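One can show that, under $H_0$, $(g-1)MS(Factor)/\sigma^2 \sim Chisq(g-1)$ and that $g(n-1)MS(Error)/\sigma^2 \sim Chisq(g(n-1))$ (the latter whether or not $H_0$ is true). Since the two are independent, $\sigma^2$ cancels in the ratio, and $F = MS(Factor)/MS(Error)$ has the distribution $F(g-1,g(n-1)).$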
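These distributional claims can be spot-checked by simulation. The Python sketch below assumes $g = 3$, $n = 5$, $\sigma = 2$ and $H_0$ true, and compares simulated moments with known ones: $Chisq(k)$ has mean $k$ and variance $2k$, and $F(d_1, d_2)$ has mean $d_2/(d_2 - 2)$ for $d_2 > 2$ (here $12/10 = 1.2$). Matching moments is a sanity check, not a proof.

```python
import random

def mean(xs):
    return sum(xs) / len(xs)

def sample_var(xs):
    m = mean(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

g, n, sigma, reps = 3, 5, 2.0, 20000
df1, df2 = g - 1, g * (n - 1)  # 2 and 12
rng = random.Random(2024)

q_factor, q_error, f_values = [], [], []
for _ in range(reps):
    groups = [[rng.gauss(0.0, sigma) for _ in range(n)] for _ in range(g)]  # H0 true
    means = [mean(y) for y in groups]
    grand = mean(means)
    msf = n / df1 * sum((m - grand) ** 2 for m in means)
    mse = mean([sample_var(y) for y in groups])
    q_factor.append(df1 * msf / sigma ** 2)  # should behave like Chisq(df1)
    q_error.append(df2 * mse / sigma ** 2)   # should behave like Chisq(df2)
    f_values.append(msf / mse)               # should behave like F(df1, df2)

print(f"q_factor: mean {mean(q_factor):.3f} vs {df1}, variance {sample_var(q_factor):.3f} vs {2 * df1}")
print(f"q_error:  mean {mean(q_error):.3f} vs {df2}, variance {sample_var(q_error):.3f} vs {2 * df2}")
print(f"F:        mean {mean(f_values):.3f} vs {df2 / (df2 - 2):.3f}")
```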
