What does it mean if $\det(A)$ equals $1$? Does it mean that the identity matrix can be obtained from $A$ using only the operation of adding a multiple of one row to another?


Matrices with determinant $1$ preserve volume. If every point of a shape is transformed by the matrix to form a new shape, the factor by which the area (or volume) changes is the absolute value of the determinant of the matrix. For example, if the determinant of a matrix $A$ is $5$, then $A$ maps a unit cube onto a shape with volume $5 \times 1=5$. The identity matrix, whose determinant is $1$, maps a unit cube onto a shape with volume $1 \times 1 = 1$.

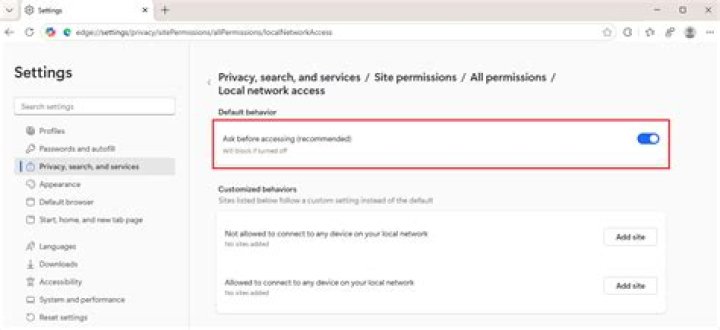

Added

After reading @danielV's helpful comment, I added this image from Determinant and linear transformation to give more explanation.

It shows a unit square transformed by a matrix with determinant $4$. Notice that the area of the resulting shape is $1 \times 4 = 4$.
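A minimal Python sketch of the area-scaling claim, assuming 2×2 matrices stored as nested lists: it maps the corners of the unit square through a matrix and measures the image with the shoelace formula. The helper names (`det2`, `apply`, `polygon_area`) are my own, and the determinant-4 matrix is a hypothetical example, not necessarily the one in the image. The swap matrix shows why the *absolute value* of the determinant is what matters.

```python
def det2(m):
    """Determinant of a 2x2 matrix given as [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def apply(m, v):
    """Multiply the 2x2 matrix m by the column vector v = (x, y)."""
    (a, b), (c, d) = m
    x, y = v
    return (a * x + b * y, c * x + d * y)

def polygon_area(pts):
    """Unsigned area of a simple polygon (vertices in order) via the shoelace formula."""
    s = 0
    for (x1, y1), (x2, y2) in zip(pts, pts[1:] + pts[:1]):
        s += x1 * y2 - x2 * y1
    return abs(s) / 2

unit_square = [(0, 0), (1, 0), (1, 1), (0, 1)]

# Hypothetical examples: determinant 4, a shear with determinant 1, a swap with determinant -1.
for M in ([[2, 1], [0, 2]], [[1, 1], [0, 1]], [[0, 1], [1, 0]]):
    image = [apply(M, p) for p in unit_square]
    print(M, det2(M), polygon_area(image))
```

In each case the printed area equals $|\det M|$, because linear maps send the square to a parallelogram whose area is the unit area scaled by $|\det M|$.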

Usually, when we talk about the determinant without any other information attached, the only really relevant information is whether or not it is zero. However, if we are interested in geometry, matrices with determinant $1$ have some significance. Namely, an important subset of them forms the so-called special orthogonal group, which is just a fancy way of saying that they are generalizations of rotations.

[Note: As mentioned in the comments, I was a bit too eager. There are (many) matrices of determinant $1$ that are not special orthogonal. I do not know of any significant property that all matrices of determinant $1$ share, except that they are volume-preserving, as noted by Semsem.]

For example, matrices of the form $$\begin{pmatrix}\cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix}$$ have determinant $\cos^2\theta + \sin^2\theta = 1$, and you can prove that each one corresponds precisely to a counterclockwise rotation of the plane by $\theta$.
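A quick numerical check in Python, assuming the standard counterclockwise rotation matrix $\begin{pmatrix}\cos\theta & -\sin\theta \\ \sin\theta & \cos\theta\end{pmatrix}$: its determinant is $1$ (up to floating-point rounding), and it sends $e_1 = (1, 0)$ to $(\cos\theta, \sin\theta)$, so for $\theta = \pi/2$ the vector $e_1$ is turned counterclockwise onto $e_2$. The function names are my own, for illustration only.

```python
import math

def rotation(theta):
    """Matrix of the counterclockwise rotation of the plane by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def det2(m):
    """Determinant of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def apply(m, v):
    """Multiply the 2x2 matrix m by the column vector v = (x, y)."""
    (a, b), (c, d) = m
    x, y = v
    return (a * x + b * y, c * x + d * y)

for theta in (0.3, math.pi / 2, 2.0):
    R = rotation(theta)
    # det = cos^2 + sin^2, so this prints 1 up to rounding;
    # the image of e1 is (cos theta, sin theta).
    print(theta, det2(R), apply(R, (1.0, 0.0)))
```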

What properties of rotations do they possess? To answer this question, you need to think of a matrix as more than a tool for writing down a system of equations (which is, unfortunately, how I was first taught them). You can think of a matrix as a function that takes in vectors and outputs other vectors, and these functions correspond to geometric transformations of the vector space (the plane, three-dimensional space, or beyond). I'm not sure what level you're coming at this from; I just mean that there are several ways of looking at matrices, and one of them is a bit more abstract (so it is not taught in some classes) but is good for understanding why these determinant-one matrices are like rotations.

First, they are orientation-preserving, which just means that a right-handed coordinate system never turns into a left-handed one; equivalently, no picture is mapped under the transformation to its mirror image (unless it was already symmetric). They are also distance-preserving: points that were a distance $d$ apart before the transformation remain a distance $d$ apart afterwards.
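To see that distance preservation is special to rotations and not shared by every determinant-one matrix (the point of the note above), here is a small Python sketch comparing a rotation with the shear $\begin{pmatrix}1 & 1\\ 0 & 1\end{pmatrix}$, which also has determinant $1$. The angle and the two sample points are arbitrary choices of mine; `math.dist` needs Python 3.8 or later.

```python
import math

def apply(m, v):
    """Multiply the 2x2 matrix m by the column vector v = (x, y)."""
    (a, b), (c, d) = m
    x, y = v
    return (a * x + b * y, c * x + d * y)

def det2(m):
    """Determinant of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

theta = 0.7  # arbitrary angle, chosen only for illustration
rot = [[math.cos(theta), -math.sin(theta)], [math.sin(theta), math.cos(theta)]]
shear = [[1, 1], [0, 1]]  # determinant 1, but not a rotation

p, q = (1.0, 2.0), (-3.0, 0.5)  # arbitrary sample points
for name, m in (("rotation", rot), ("shear", shear)):
    before = math.dist(p, q)
    after = math.dist(apply(m, p), apply(m, q))
    # Both matrices have determinant 1, but only the rotation keeps before == after.
    print(name, round(det2(m), 12), round(before, 6), round(after, 6))
```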

For what it's worth, Sabyasachi's construction also works when only row manipulations are allowed. However, see the comments for some subtleties about row reduction; small differences in the definitions can change whether or not you can do this.

$$A = \begin{pmatrix}-1 & 0 \\ 0 & -1\end{pmatrix}$$

$$R_2 \to R_2 - R_1$$

$$\begin{pmatrix}-1 & 0 \\ 1 & -1\end{pmatrix}$$

$$R_1 \to R_1 + R_2$$

$$\begin{pmatrix}0 & -1 \\ 1 & -1\end{pmatrix}$$

$$R_2 \to R_2 - R_1$$

$$\begin{pmatrix}0 & -1 \\ 1 & 0\end{pmatrix}$$

$$R_1 \to R_1 + R_2$$

$$\begin{pmatrix}1 & -1 \\ 1 & 0\end{pmatrix}$$

$$R_2 \to R_1 + R_2$$

$$\begin{pmatrix}1 & -1 \\ 2 & -1\end{pmatrix}$$

$$R_2 \to R_2 - 2R_1$$

$$\begin{pmatrix}1 & -1 \\ 0 & 1\end{pmatrix}$$

$$R_1 \to R_1 + R_2$$

$$\begin{pmatrix}1 & 0 \\ 0 & 1\end{pmatrix}= I_2$$
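As a sanity check, here is a minimal Python sketch (the helper `add_row` is my own, not from any library) that replays this row-only reduction of $-I_2$ and confirms that it ends at $I_2$. It takes the second operation as $R_1 \to R_1 + R_2$ and closes with $R_1 \to R_1 + R_2$ after $R_2 \to R_2 - 2R_1$, since those are the operations that produce the displayed matrices.

```python
def add_row(m, target, source, c):
    """Return a copy of m with row `target` replaced by row_target + c * row_source."""
    m = [row[:] for row in m]
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]
    return m

A = [[-1, 0], [0, -1]]
# (target, source, multiplier) with 0-indexed rows: R1 is row 0, R2 is row 1.
steps = [
    (1, 0, -1),  # R2 -> R2 - R1
    (0, 1, 1),   # R1 -> R1 + R2
    (1, 0, -1),  # R2 -> R2 - R1
    (0, 1, 1),   # R1 -> R1 + R2
    (1, 0, 1),   # R2 -> R1 + R2
    (1, 0, -2),  # R2 -> R2 - 2R1
    (0, 1, 1),   # R1 -> R1 + R2
]
M = A
for t, s, c in steps:
    M = add_row(M, t, s, c)
    print(M)  # intermediate matrix after this operation
```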

NOTE: My original answer was about a different question (I had considered only adding integer multiples of rows). I have completely rewritten it; hopefully the new answer is correct.

Other answers have already explained the geometric meaning of $\det(A)=1$, so let me address the question about row operations.

Let us start by checking what can be obtained from a given regular (invertible) matrix $A$ using only one type of row operation: adding a multiple of one row to another row.

We can exchange two rows, at the cost of changing the sign of one of them

If we want to exchange rows $\vec\alpha_1$ and $\vec\alpha_2$ we can proceed like this: $$\begin{pmatrix}\vec\alpha_1\\\vec\alpha_2\end{pmatrix}\sim \begin{pmatrix}\vec\alpha_1\\\vec\alpha_1+\vec\alpha_2\end{pmatrix}\sim \begin{pmatrix}-\vec\alpha_2\\\vec\alpha_1+\vec\alpha_2\end{pmatrix}\sim \begin{pmatrix}-\vec\alpha_2\\\vec\alpha_1\end{pmatrix}.$$
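The three row additions in this chain can be sketched in Python as follows (the helper names are hypothetical, chosen for illustration). With $\vec\alpha_1$ in row `i` and $\vec\alpha_2$ in row `j`, the result has $-\vec\alpha_2$ in row `i` and $\vec\alpha_1$ in row `j`, exactly as in the last matrix above.

```python
def add_row(m, target, source, c):
    """Return a copy of m with row `target` replaced by row_target + c * row_source."""
    m = [row[:] for row in m]
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]
    return m

def swap_with_sign(m, i, j):
    """Exchange rows i and j using only row additions; old row j arrives negated."""
    m = add_row(m, j, i, 1)   # row j <- a1 + a2
    m = add_row(m, i, j, -1)  # row i <- a1 - (a1 + a2) = -a2
    m = add_row(m, j, i, 1)   # row j <- (a1 + a2) + (-a2) = a1
    return m

print(swap_with_sign([[1, 2], [3, 4]], 0, 1))  # [[-3, -4], [1, 2]]
```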

We can obtain a diagonal matrix from $A$.

We proceed similarly to Gaussian elimination, except that we are not allowed to multiply rows by scalars, so we do not get $1$'s on the diagonal; instead we obtain some non-zero elements.

We proceed inductively over $k = 1, \dots, n$; writing $b_{ij}$ for the entries of the current matrix, at step $k$:

- If the element $b_{kk}$ is non-zero, we use it to get zeroes in the $k$-th column of all other rows.

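- If $b_{kk}=0$, at least one of the elements $b_{k+1,k},\dots,b_{n,k}$ must be non-zero. (Otherwise rows $k,\dots,n$ would have zeros in columns $1,\dots,k$, so these $n-k+1$ rows would be linearly dependent and we would have $\det(A)=0$.) We exchange rows, as above, so that a non-zero number lands in position $(k,k)$. Then we can proceed as before.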
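A minimal Python sketch of this diagonalization, assuming exact rational arithmetic via `fractions.Fraction` so that zero tests are reliable. The helper names are my own; row exchanges use the sign-changing swap built from three row additions, so only "add a multiple of one row to another" is ever applied.

```python
from fractions import Fraction

def add_row(m, target, source, c):
    """In place: R_target -> R_target + c * R_source."""
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]

def swap_with_sign(m, i, j):
    """In place: exchange rows i and j with three row additions (old row j arrives negated)."""
    add_row(m, j, i, 1)
    add_row(m, i, j, -1)
    add_row(m, j, i, 1)

def diagonalize(a):
    """Return a diagonal matrix reached from the invertible matrix a by row additions only."""
    m = [[Fraction(x) for x in row] for row in a]
    n = len(m)
    for k in range(n):
        if m[k][k] == 0:
            # Look below row k for a non-zero entry in column k.
            p = next((i for i in range(k + 1, n) if m[i][k] != 0), None)
            if p is None:
                raise ValueError("matrix is singular")
            swap_with_sign(m, k, p)
        # Use the non-zero pivot to clear column k in every other row.
        for i in range(n):
            if i != k and m[i][k] != 0:
                add_row(m, i, k, -m[i][k] / m[k][k])
    return m

print(diagonalize([[2, 1], [1, 1]]))  # diagonal entries 2 and 1/2
```

Since none of the operations changes the determinant, the product of the diagonal entries of the result equals $\det(A)$.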

From the diagonal matrix we can get the identity matrix $I$ (this is where $\det(A)=1$ is used).

We inductively repeat steps like these: $$\begin{pmatrix}d_1&0\\0&d_2\end{pmatrix}\sim \begin{pmatrix}d_1&0\\1&d_2\end{pmatrix}\sim \begin{pmatrix}0&-d_1d_2\\1&d_2\end{pmatrix}\sim \begin{pmatrix}0&-d_1d_2\\1&0\end{pmatrix}\sim \begin{pmatrix}1&-d_1d_2\\1&0\end{pmatrix}\sim \begin{pmatrix}1&0\\0&d_1d_2\end{pmatrix}$$
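The chain above only shows the matrices, so the multipliers in this Python sketch are my own reading of each $\sim$ (the last $\sim$ hides two operations). The hypothetical `merge_pair` turns diagonal entries $d_1, d_2$ in rows `i`, `j` into $1, d_1 d_2$ using only row additions; exact fractions avoid rounding.

```python
from fractions import Fraction

def add_row(m, target, source, c):
    """In place: R_target -> R_target + c * R_source."""
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]

def merge_pair(a, i, j):
    """Return a copy of the diagonal matrix a with (d1, d2) at (i,i), (j,j) turned into (1, d1*d2)."""
    m = [[Fraction(x) for x in row] for row in a]
    d1 = m[i][i]
    add_row(m, j, i, 1 / d1)  # entry (j, i) becomes 1
    add_row(m, i, j, -d1)     # row i becomes (0, -d1*d2)
    add_row(m, j, i, 1 / d1)  # entry (j, j) becomes 0
    add_row(m, i, j, 1)       # entry (i, i) becomes 1
    add_row(m, j, i, -1)      # row j becomes (0, d1*d2)
    add_row(m, i, j, 1)       # entry (i, j) becomes 0
    return m

print(merge_pair([[3, 0], [0, 5]], 0, 1))  # diagonal entries 1 and 15
```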

Row additions, and therefore the sign-changing row exchanges built from them, do not change the determinant, so $d_1d_2\cdots d_n=\det(A)=1$. Applying this step to consecutive pairs of diagonal entries, we arrive at $$\begin{pmatrix} 1 & 0 & \dots & 0 & 0 \\ 0 & 1 & \dots & 0 & 0 \\ \ldots & \ldots & \ddots & \ldots & \ldots \\ 0 & 0 & \dots & 1 & 0 \\ 0 & 0 & \dots & 0 & d_1d_2\dots d_n \end{pmatrix}= \begin{pmatrix} 1 & 0 & \dots & 0 & 0 \\ 0 & 1 & \dots & 0 & 0 \\ \ldots & \ldots & \ddots & \ldots & \ldots \\ 0 & 0 & \dots & 1 & 0 \\ 0 & 0 & \dots & 0 & 1 \end{pmatrix}=I_n $$
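Putting the pieces together, here is a self-contained Python sketch of the whole procedure, under the same assumptions as before (exact `Fraction` arithmetic, helper names of my own). It diagonalizes using only row additions, then merges consecutive diagonal entries, so the final matrix is $\operatorname{diag}(1, \dots, 1, \det A)$, which equals $I_n$ exactly when $\det(A) = 1$.

```python
from fractions import Fraction

def add_row(m, target, source, c):
    """In place: R_target -> R_target + c * R_source (the only operation used)."""
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]

def swap_with_sign(m, i, j):
    """In place: exchange rows i and j; old row j arrives negated."""
    add_row(m, j, i, 1)
    add_row(m, i, j, -1)
    add_row(m, j, i, 1)

def diagonalize(m):
    """In place: make the invertible matrix m diagonal."""
    n = len(m)
    for k in range(n):
        if m[k][k] == 0:
            p = next((i for i in range(k + 1, n) if m[i][k] != 0), None)
            if p is None:
                raise ValueError("matrix is singular")
            swap_with_sign(m, k, p)
        for i in range(n):
            if i != k and m[i][k] != 0:
                add_row(m, i, k, -m[i][k] / m[k][k])

def merge_pair(m, i, j):
    """In place: diagonal entries (d1, d2) at (i,i), (j,j) become (1, d1*d2)."""
    d1 = m[i][i]
    add_row(m, j, i, 1 / d1)
    add_row(m, i, j, -d1)
    add_row(m, j, i, 1 / d1)
    add_row(m, i, j, 1)
    add_row(m, j, i, -1)
    add_row(m, i, j, 1)

def reduce_to_identity(a):
    """Return diag(1, ..., 1, det(a)) reached from a by row additions only."""
    m = [[Fraction(x) for x in row] for row in a]
    diagonalize(m)
    for i in range(len(m) - 1):
        merge_pair(m, i, i + 1)
    return m

# A cyclic permutation matrix has determinant 1, so it reduces to I_3.
print(reduce_to_identity([[0, 1, 0], [0, 0, 1], [1, 0, 0]]))
```

For a matrix with $\det(A) \neq 1$ (say $\operatorname{diag}(2, 3)$), the last diagonal entry stays equal to the determinant, which is why the determinant condition is needed.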

Saying $\det(A) = 1$ means that for

$$ A = \begin{pmatrix} a_{11}&a_{12}&\cdots &a_{1n}\\ a_{21}&a_{22}&\cdots &a_{2n}\\ \vdots&\vdots&\ddots &\vdots\\ a_{n1}&a_{n2}&\cdots &a_{nn}\\ \end{pmatrix} $$ the determinant evaluates to one. This gives $\det(A) = \det(I_n)$, where $I_n$ is the $n \times n$ identity matrix, but it does not mean that $A = I_n$: for example, $-I_2$ also has determinant $1$.

Still, row manipulations should in principle be able to yield the identity matrix, but it is hard to say how complicated the manipulations have to be.

In response to k.stm's question, in a comment below the OP's question, about one particular matrix:

$$A = \begin{pmatrix}-1 & 0 \\ 0 & -1\end{pmatrix}$$

$$C_1 \to C_1 - C_2$$

$$A = \begin{pmatrix}-1 & 0 \\ 1 & -1\end{pmatrix}$$

$$R_1 \to R_1 + R_2$$

$$A = \begin{pmatrix}0 & -1 \\ 1 & -1\end{pmatrix}$$

$$C_1 \to C_1 - C_2$$

$$A = \begin{pmatrix}1 & -1 \\ 2 & -1\end{pmatrix}$$

$$R_2 \to R_2 - 2R_1$$ and $$R_1 \to R_1 + R_2$$

$$A = \begin{pmatrix}1 & 0 \\ 0 & 1\end{pmatrix}$$

and voila!
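A similar Python sketch for this mixed sequence, where column operations act on columns instead of rows. The helpers `add_row` and `add_col` are hypothetical names of my own; the second operation is taken as $R_1 \to R_1 + R_2$, which is the one that turns $\begin{pmatrix}-1 & 0\\ 1 & -1\end{pmatrix}$ into $\begin{pmatrix}0 & -1\\ 1 & -1\end{pmatrix}$.

```python
def add_row(m, target, source, c):
    """R_target -> R_target + c * R_source (returns a new matrix)."""
    m = [row[:] for row in m]
    m[target] = [t + c * s for t, s in zip(m[target], m[source])]
    return m

def add_col(m, target, source, c):
    """C_target -> C_target + c * C_source (returns a new matrix)."""
    m = [row[:] for row in m]
    for row in m:
        row[target] += c * row[source]
    return m

# Indices are 0-based: R1/C1 is index 0, R2/C2 is index 1.
A = [[-1, 0], [0, -1]]
A = add_col(A, 0, 1, -1)  # C1 -> C1 - C2
A = add_row(A, 0, 1, 1)   # R1 -> R1 + R2
A = add_col(A, 0, 1, -1)  # C1 -> C1 - C2
A = add_row(A, 1, 0, -2)  # R2 -> R2 - 2R1
A = add_row(A, 0, 1, 1)   # R1 -> R1 + R2
print(A)  # [[1, 0], [0, 1]]
```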
